Active Collaborations

Past Collaborations

Projects

Transformers Concept Net

A large dataset of 39K latent concepts learned within the representations of various transformer models, annotated using ChatGPT. Released as part of the paper "Can LLMs Facilitate Interpretation of Pre-trained Language Models?" at EMNLP'23.

Project page

BERT Concept Net

An annotated dataset of latent concepts learned within the representations of BERT. Released as part of the paper "Discovering Latent Concepts Learned in BERT" at ICLR'22.

Project page

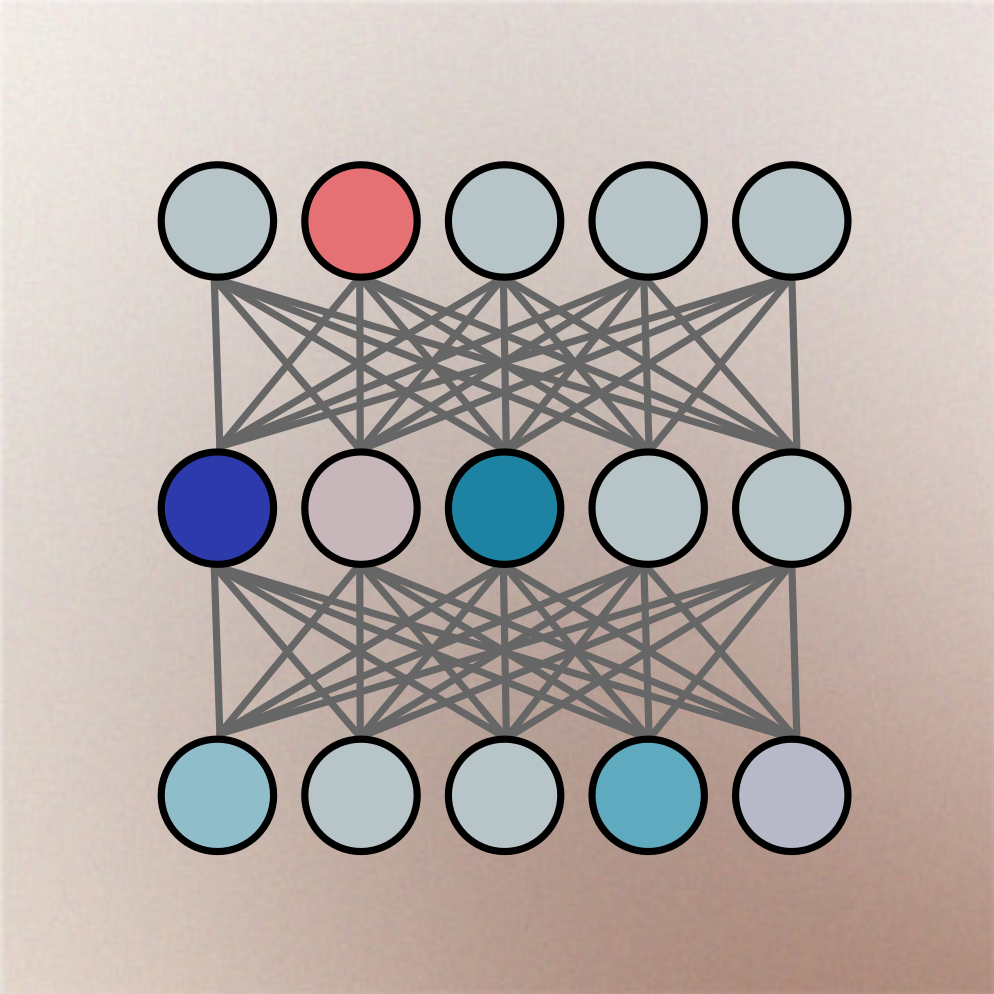

NeuroX Toolkit

A Python library that encapsulates various methods for neuron interpretation and analysis, geared towards Deep NLP models. The library is a one-stop shop for activation extraction, probe training, clustering analysis, neuron selection and more.
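To make the probe training and neuron selection steps mentioned above more concrete, here is a minimal, self-contained sketch in plain Python. It does not use the NeuroX API: the function names, the synthetic activations, and the hyperparameters are all assumptions made for illustration, and ranking neurons by absolute probe weight is only one simple selection heuristic.

```python
import math
import random


def make_toy_activations(n_tokens=200, n_neurons=8, signal_neuron=3, seed=0):
    """Synthetic 'activations': one neuron carries the binary label, the rest are noise."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n_tokens):
        label = rng.randint(0, 1)
        row = [rng.gauss(0.0, 1.0) for _ in range(n_neurons)]
        # Shift the signal neuron by +/-2 depending on the label.
        row[signal_neuron] += 2.0 if label == 1 else -2.0
        X.append(row)
        y.append(label)
    return X, y


def sigmoid(z):
    # Clamp to avoid overflow in math.exp for large |z|.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_probe(X, y, epochs=200, lr=0.1, l2=0.01):
    """Logistic-regression probe trained with full-batch gradient descent."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for row, label in zip(X, y):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, row)) + b) - label
            for j in range(d):
                grad_w[j] += err * row[j]
            grad_b += err
        # Gradient step with a small L2 penalty on the weights.
        w = [wi - lr * (g / n + l2 * wi) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_accuracy(w, b, X, y):
    """Fraction of examples the probe classifies correctly."""
    correct = sum(
        (sigmoid(sum(wi * xi for wi, xi in zip(w, row)) + b) >= 0.5) == bool(label)
        for row, label in zip(X, y)
    )
    return correct / len(y)


def rank_neurons(w):
    """Order neuron indices by absolute probe weight, largest first."""
    return sorted(range(len(w)), key=lambda j: abs(w[j]), reverse=True)


X, y = make_toy_activations()
w, b = train_linear_probe(X, y)
ranking = rank_neurons(w)
# Accuracy here is measured on the training data, which is enough for a toy check.
print("probe accuracy:", probe_accuracy(w, b, X, y))
print("neurons ranked by |weight|:", ranking)
```

In this toy setup the neuron that was constructed to carry the label should come out at the top of the ranking. With real models, the activations would come from an extraction step rather than a random generator, and the probe would be evaluated on held-out data.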

Model Explorer

A GUI toolkit that provides several methods to identify salient neurons with respect to the model itself or an external task. Provides visualization, ablation, and manipulation of neurons within a given model.

Try the Demo

NxPlain

Explaining model predictions made easy

Try the Demo

Publications

| [1] | Asim Ersoy, Basel Ahmad Mousi, Shammur Absar Chowdhury, Firoj Alam, Fahim I Dalvi, and Nadir Durrani. From Words to Waves: Analyzing Concept Formation in Speech and Text-Based Foundation Models. In Proceedings of the 26th edition of the Interspeech Conference, pages 241--245, Rotterdam, Netherlands, August 2025. Interspeech 2025. [ bib | DOI | .pdf | .pdf ] |

| [2] | Sabri Boughorbel, Fahim Dalvi, Nadir Durrani, and Majd Hawasly. Beyond the leaderboard: Model diffing for understanding performance disparities in LLMs. In The 2025 Conference on Empirical Methods in Natural Language Processing, 2025. [ bib | http ] |

| [3] | Xuemin Yu, Fahim Dalvi, Nadir Durrani, Marzia Nouri, and Hassan Sajjad. Latent concept-based explanation of NLP models. In Yaser Al-Onaizan, Mohit Bansal, and Yun-Nung Chen, editors, Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 12435--12459, Miami, Florida, USA, November 2024. Association for Computational Linguistics. [ bib | DOI | http ] |

| [4] | Basel Mousi, Nadir Durrani, Fahim Dalvi, Majd Hawasly, and Ahmed Abdelali. Exploring alignment in shared cross-lingual spaces. In Lun-Wei Ku, Andre Martins, and Vivek Srikumar, editors, Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 6326--6348, Bangkok, Thailand, August 2024. Association for Computational Linguistics. [ bib | http ] |

| [5] | Majd Hawasly, Fahim Dalvi, and Nadir Durrani. Scaling up discovery of latent concepts in deep NLP models. In Yvette Graham and Matthew Purver, editors, Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics (Volume 1: Long Papers), pages 793--806, St. Julian's, Malta, March 2024. Association for Computational Linguistics. [ bib | http ] |

| [1] | Shammur Absar Chowdhury, Nadir Durrani, and Ahmed Ali. What do end-to-end speech models learn about speaker, language and channel information? A layer-wise and neuron-level analysis. Computer Speech & Language, 83:101539, January 2024. [ bib | DOI | http ] |

| [13] | Nadir Durrani, Fahim Dalvi, and Hassan Sajjad. Discovering salient neurons in deep NLP models. Journal of Machine Learning Research, 24(362):1--40, 2023. [ bib | .html | .pdf ] |

| [2] | Yimin Fan, Fahim Dalvi, Nadir Durrani, and Hassan Sajjad. Evaluating neuron interpretation methods of NLP models. In Thirty-seventh Conference on Neural Information Processing Systems, December 2023. [ bib ] |

| [3] | Basel Mousi, Nadir Durrani, and Fahim Dalvi. Can LLMs facilitate interpretation of pre-trained language models? In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, December 2023. [ bib | .pdf ] |

| [4] | Fahim Dalvi, Hassan Sajjad, and Nadir Durrani. NeuroX library for neuron analysis of deep NLP models. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 3: System Demonstrations), pages 226--234, Toronto, Canada, July 2023. Association for Computational Linguistics. [ bib | DOI | http ] |

| [5] | Fahim Dalvi, Nadir Durrani, Hassan Sajjad, Tamim Jaban, Mus'ab Husaini, and Ummar Abbas. NxPlain: A web-based tool for discovery of latent concepts. In Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations, pages 75--83, Dubrovnik, Croatia, May 2023. Association for Computational Linguistics. [ bib | DOI | http ] |

| [6] | Hassan Sajjad, Fahim Dalvi, Nadir Durrani, and Preslav Nakov. On the effect of dropping layers of pre-trained transformer models. Computer Speech & Language, 77(C):101429, January 2023. [ bib | DOI | http ] |

| [7] | Firoj Alam, Fahim Dalvi, Nadir Durrani, Hassan Sajjad, Abdul Rafae Khan, and Jia Xu. ConceptX: A framework for latent concept analysis. Proceedings of the AAAI Conference on Artificial Intelligence, 37(13):16395--16397, September 2023. [ bib | DOI | http ] |

| [8] | Ahmed Abdelali, Nadir Durrani, Fahim Dalvi, and Hassan Sajjad. Post-hoc analysis of Arabic transformer models. In Proceedings of the Fifth BlackboxNLP Workshop on Analyzing and Interpreting Neural Networks for NLP, Abu Dhabi, United Arab Emirates, December 2022. Association for Computational Linguistics. [ bib ] |

| [9] | Nadir Durrani, Hassan Sajjad, Fahim Dalvi, and Firoj Alam. On the transformation of latent space in fine-tuned NLP models. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pages 1495--1516, Abu Dhabi, United Arab Emirates, December 2022. Association for Computational Linguistics. [ bib | DOI | http ] |

| [10] | Hassan Sajjad, Nadir Durrani, and Fahim Dalvi. Neuron-level interpretation of deep NLP models: A survey. Transactions of the Association for Computational Linguistics, 10:1285--1303, November 2022. [ bib | DOI | http ] |

| [11] | Hassan Sajjad, Firoj Alam, Fahim Dalvi, and Nadir Durrani. Effect of post-processing on contextualized word representations. In Proceedings of the 29th International Conference on Computational Linguistics, pages 3127--3142, Gyeongju, Republic of Korea, October 2022. International Committee on Computational Linguistics. [ bib | http ] |

| [12] | Hassan Sajjad, Nadir Durrani, Fahim Dalvi, Firoj Alam, Abdul Khan, and Jia Xu. Analyzing encoded concepts in transformer language models. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 3082--3101, Seattle, United States, July 2022. Association for Computational Linguistics. [ bib | DOI | http ] |

| [13] | Fahim Dalvi, Abdul Khan, Firoj Alam, Nadir Durrani, Jia Xu, and Hassan Sajjad. Discovering latent concepts learned in BERT. In International Conference on Learning Representations, May 2022. [ bib | http ] |

| [14] | Nadir Durrani, Hassan Sajjad, and Fahim Dalvi. How transfer learning impacts linguistic knowledge in deep NLP models? In Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, pages 4947--4957, Online, August 2021. Association for Computational Linguistics. [ bib | DOI | http ] |

| [15] | Hassan Sajjad, Narine Kokhlikyan, Fahim Dalvi, and Nadir Durrani. Fine-grained interpretation and causation analysis in deep NLP models. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies: Tutorials, pages 5--10, Online, June 2021. Association for Computational Linguistics. [ bib | DOI | http ] |

| [16] | Fahim Dalvi, Hassan Sajjad, Nadir Durrani, and Yonatan Belinkov. Analyzing redundancy in pretrained transformer models. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 4908--4926, Online, November 2020. Association for Computational Linguistics. [ bib | DOI | http ] |

| [17] | Nadir Durrani, Hassan Sajjad, Fahim Dalvi, and Yonatan Belinkov. Analyzing individual neurons in pre-trained language models. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 4865--4880, Online, November 2020. Association for Computational Linguistics. [ bib | DOI | http ] |

| [18] | John Wu, Yonatan Belinkov, Hassan Sajjad, Nadir Durrani, Fahim Dalvi, and James Glass. Similarity analysis of contextual word representation models. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 4638--4655, Online, July 2020. Association for Computational Linguistics. [ bib | DOI | http ] |

| [19] | Yonatan Belinkov, Nadir Durrani, Fahim Dalvi, Hassan Sajjad, and James Glass. On the linguistic representational power of neural machine translation models. Computational Linguistics, 46(1):1--52, March 2020. [ bib | DOI | http ] |

| [20] | Nadir Durrani, Fahim Dalvi, Hassan Sajjad, Yonatan Belinkov, and Preslav Nakov. One size does not fit all: Comparing NMT representations of different granularities. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 1504--1516, Minneapolis, Minnesota, June 2019. Association for Computational Linguistics. [ bib | DOI | http ] |

| [21] | Fahim Dalvi, Avery Nortonsmith, D. Anthony Bau, Yonatan Belinkov, Hassan Sajjad, Nadir Durrani, and James Glass. NeuroX: A toolkit for analyzing individual neurons in neural networks. In AAAI Conference on Artificial Intelligence (AAAI), January 2019. [ bib | http ] |

| [22] | Fahim Dalvi, Nadir Durrani, Hassan Sajjad, Yonatan Belinkov, D. Anthony Bau, and James Glass. What is one grain of sand in the desert? Analyzing individual neurons in deep NLP models. In Proceedings of the Thirty-Third AAAI Conference on Artificial Intelligence (AAAI, Oral presentation), January 2019. [ bib | http ] |

| [23] | Anthony Bau, Yonatan Belinkov, Hassan Sajjad, Nadir Durrani, Fahim Dalvi, and James Glass. Identifying and controlling important neurons in neural machine translation. In International Conference on Learning Representations, 2019. [ bib | http ] |

| [24] | Fahim Dalvi, Nadir Durrani, Hassan Sajjad, Yonatan Belinkov, and Stephan Vogel. Understanding and improving morphological learning in the neural machine translation decoder. In Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 142--151, Taipei, Taiwan, November 2017. Asian Federation of Natural Language Processing. [ bib | http ] |

| [25] | Yonatan Belinkov, Lluís Màrquez, Hassan Sajjad, Nadir Durrani, Fahim Dalvi, and James Glass. Evaluating layers of representation in neural machine translation on part-of-speech and semantic tagging tasks. In Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 1--10, Taipei, Taiwan, November 2017. Asian Federation of Natural Language Processing. [ bib | http ] |

| [26] | Yonatan Belinkov, Nadir Durrani, Fahim Dalvi, Hassan Sajjad, and James Glass. What do neural machine translation models learn about morphology? In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (ACL), Vancouver, July 2017. Association for Computational Linguistics. [ bib | .pdf ] |

Media Coverage

Several works from these projects have been covered by science media.

Team

Core Team

Collaborators

Past Collaborators

Opportunities

Toolkit Contributions

We are happy to mentor researchers and engineers interested in contributing to our open-source toolkit. Follow the link for some ideas, or get in touch to suggest your own!

Potential Ideas